Overview

The idea behind the platform.ai mobile app is to help users test their deep learning models in real time using their smartphones. After creating a new project on platform.ai, users can pick a picture with their smartphone camera or from the gallery and classify it with their own deep learning models on platform.ai.

App Development Process

The app was built with React Native so that it could be distributed on both Android and iOS from a single codebase. The app’s functionality didn’t require any complex native features, which made the decision easier for us. For React Native development, we used Expo as the development environment. The Expo website describes it as ‘The fastest way to build apps’. With Expo tools, services, and React Native, we can build, deploy, and quickly iterate on native iOS and Android apps from the same JavaScript codebase.

App Installation for Developers

$ git clone https://github.com/fellowship/expo-prediction.git

$ cd expo-prediction

$ npm install # installs the packages listed in package.json

$ expo start # starts the Expo development server on localhost

You can now open the expo-prediction repo in any code editor and modify the code; changes are reflected immediately on your simulator or smartphone.

Important Project Files

- app.json: Contains the Expo configuration (app name, slug, SDK version, platforms, etc.) required during the build process. Orientation, icon, and splash screen settings are also stored here.

- package.json: Lists all the dependencies (npm packages). npm install reads this file to install the packages used in the project.

- components/: This directory contains the components used in the project.

- assets/: This contains the icons, splash screen, and images used in the project.

- components/Home.js: Contains the home screen component.

- components/TestModel.js: Contains the TestModel screen component.

- App.js: The root component of the app. It initializes a StackNavigator and adds the Home and TestModel screens to it.

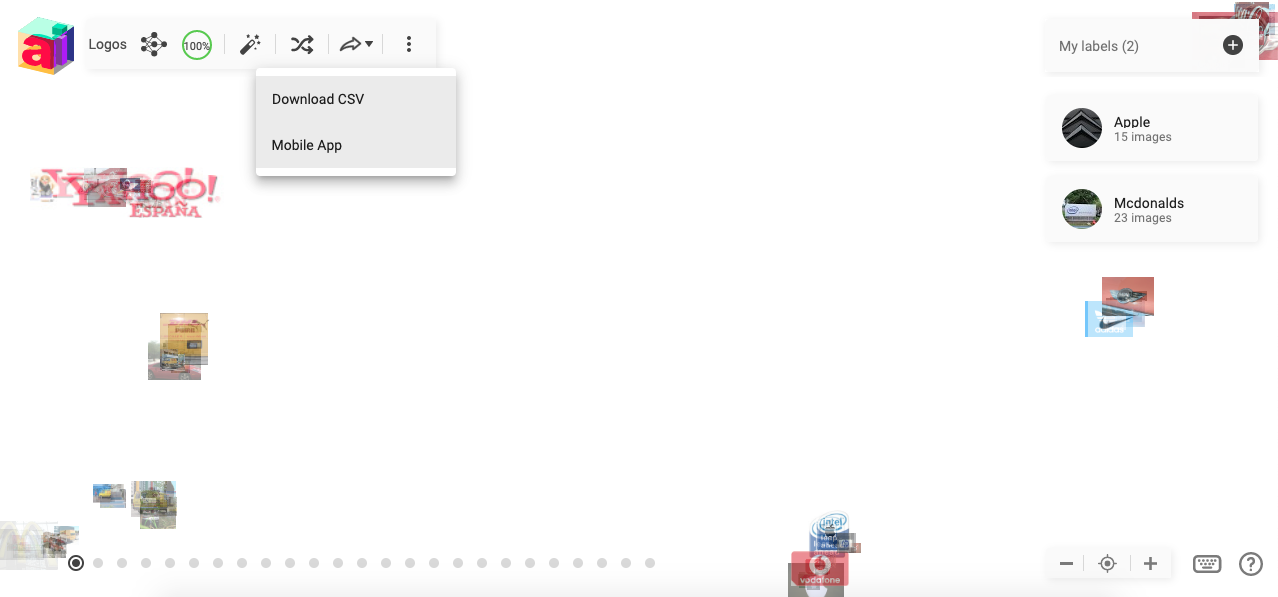

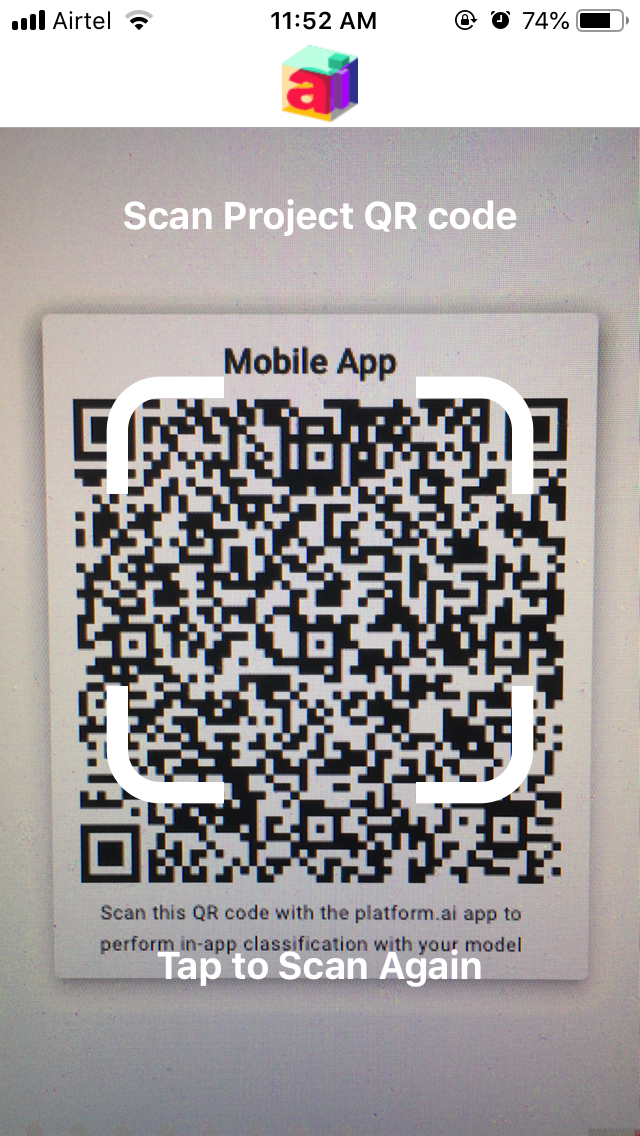

Home Screen

We use the BarCodeScanner component out of the box from the Expo SDK, and AppLoading to preload and cache assets for a better user experience. On first run, the app asks the user for permission to access the camera and gallery. The QR code scanner screen is shown only if permission is granted. Once the user scans the QR code containing the project configuration, the app checks the configuration for correctness. If the check passes, we navigate (using react-navigation) to the TestModel screen and pass the project configuration along in the navigation state.
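The correctness check and the navigation step could be sketched roughly as follows. This is a minimal illustration, not the app's actual code: the field names `accessToken` and `models`, and the helper names `parseProjectConfig` and `handleBarCodeScanned`, are assumptions made for the example, since the real QR payload format is not described here.

```javascript
// Hypothetical QR payload: a JSON string with an access token and a
// non-empty list of models. The real field names may differ.
function parseProjectConfig(qrData) {
  let config;
  try {
    config = JSON.parse(qrData);
  } catch (err) {
    return { valid: false, reason: 'QR code does not contain valid JSON' };
  }
  if (config === null || typeof config !== 'object' || Array.isArray(config)) {
    return { valid: false, reason: 'QR code does not contain a JSON object' };
  }
  if (typeof config.accessToken !== 'string' || config.accessToken.length === 0) {
    return { valid: false, reason: 'Missing access token' };
  }
  if (!Array.isArray(config.models) || config.models.length === 0) {
    return { valid: false, reason: 'No models listed in the project' };
  }
  return { valid: true, config };
}

// Scanner callback: navigate only when the check passes.
// `navigation` is the prop injected by react-navigation, and `data` is
// the string decoded from the QR code.
function handleBarCodeScanned(navigation, { data }) {
  const result = parseProjectConfig(data);
  if (result.valid) {
    navigation.navigate('TestModel', { config: result.config });
  }
  return result;
}
```

Keeping the check in a plain function, separate from the component, makes it easy to test without a camera or a simulator.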

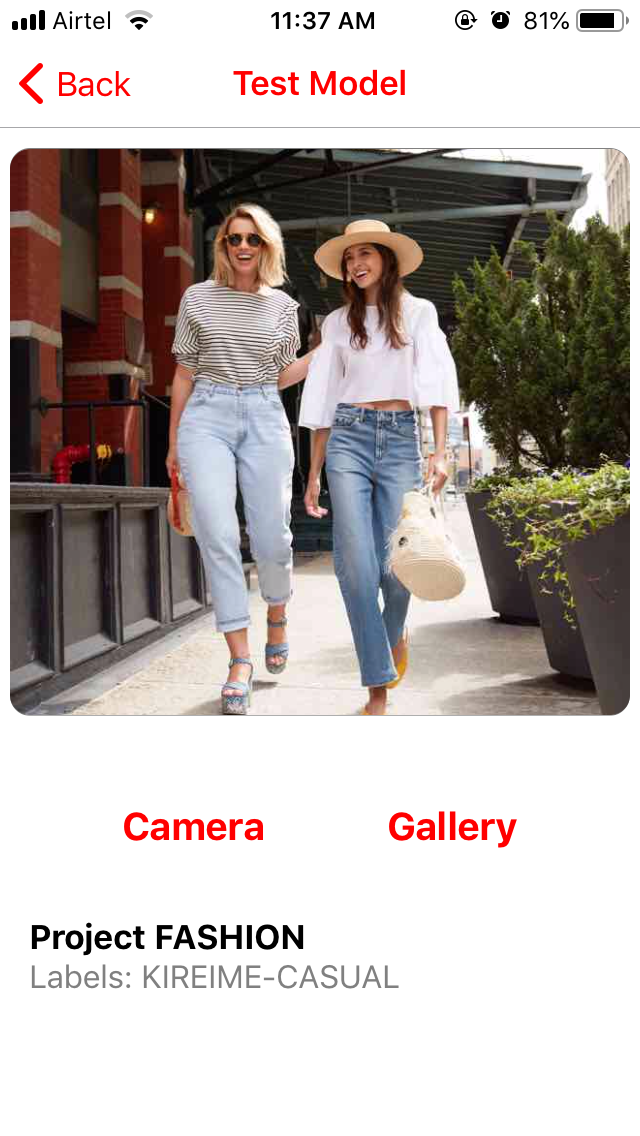

Test Model Screen

We use the ImagePicker component from the Expo SDK to choose pictures from the camera or the gallery, with a separate button for each. Using the ImagePicker API, we extract the base64 encoding of the selected image. Before sending a request to the platform.ai servers, we build a JSON object that stores the values the request needs under their corresponding keys.

The access token comes from the project configuration (QR code) scanned on the home screen. As soon as an image is selected, the app automatically starts classifying it.
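As a rough sketch of that request-building step, the function below assembles the options object one would pass to `fetch`. The key names `image`, `access_token`, and `model_id`, and the function name `buildRequest`, are placeholders chosen for illustration; the actual keys expected by the platform.ai servers are not documented here.

```javascript
// Build the options for one classification request.
// All key names in the body are assumptions, not the real platform.ai schema.
function buildRequest(base64Image, accessToken, modelId) {
  return {
    method: 'POST',
    headers: { 'Content-Type': 'application/json' },
    body: JSON.stringify({
      image: base64Image,        // base64 string taken from the picker result
      access_token: accessToken, // taken from the scanned project configuration
      model_id: modelId,         // which model should classify the image
    }),
  };
}
```

The returned object can be handed directly to `fetch(url, options)`, where `url` would be the model's endpoint from the project configuration.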

Predicting Class Labels

The app can test a single image against multiple deep learning models, as specified in the project QR code. The requests to the different models run concurrently, but the app shows the class labels only after collating the responses from all of them. This is achieved using JavaScript Promises.
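One common way to get this behaviour is `Promise.all`, shown in the sketch below. Whether the app uses `Promise.all` specifically is an assumption; `classifyWithAllModels` and the `classify` callback are hypothetical names, with `classify` standing in for whatever function sends one request and resolves with that model's label.

```javascript
// `classify(modelId)` must return a Promise of that model's label.
// In the app it would wrap the HTTP request; here it is injected.
function classifyWithAllModels(modelIds, classify) {
  // Start every request right away so they run concurrently.
  const pending = modelIds.map((id) =>
    classify(id).then((label) => ({ model: id, label }))
  );
  // Promise.all resolves once every request has resolved, keeping the
  // input order, and rejects as soon as any single request rejects.
  return Promise.all(pending);
}
```

Because `Promise.all` rejects on the first failure, a single failing model hides the results of the others. If partial results should still be shown, `Promise.allSettled` is an alternative worth considering.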

Handling Errors

We have tried to handle all the errors that could occur during the classification process. In our testing, these were the most common:

404: Server not found

413: Image Size too Large

500: Internal Server Error

502: Bad Gateway

503: Service Unavailable

When classification succeeds, the server responds with a 200 status code. Otherwise, it responds with an error code such as those listed above. We have created a dictionary of HTTP errors so that the app can display the relevant message to the user.
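Such a dictionary might look like the sketch below. The status codes and messages come from the list above; the names `HTTP_ERROR_MESSAGES` and `messageForStatus`, and the fallback message for unlisted codes, are assumptions added for the example.

```javascript
// Map of HTTP status codes to user-facing messages.
const HTTP_ERROR_MESSAGES = {
  404: 'Server not found',
  413: 'Image size too large',
  500: 'Internal server error',
  502: 'Bad gateway',
  503: 'Service unavailable',
};

// Returns null on success, otherwise the message to show the user.
function messageForStatus(status) {
  if (status === 200) {
    return null; // successful classification, nothing to report
  }
  // Fall back to a generic message for codes not in the dictionary.
  return HTTP_ERROR_MESSAGES[status] || `Unexpected error (status ${status})`;
}
```

A caller would pass in `response.status` from the classification request and show the returned string when it is not null.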

We are soon going to release the platform.ai mobile app on the respective app stores.